Highlighted Research

Full list available on Google Scholar.

*Equal Contribution

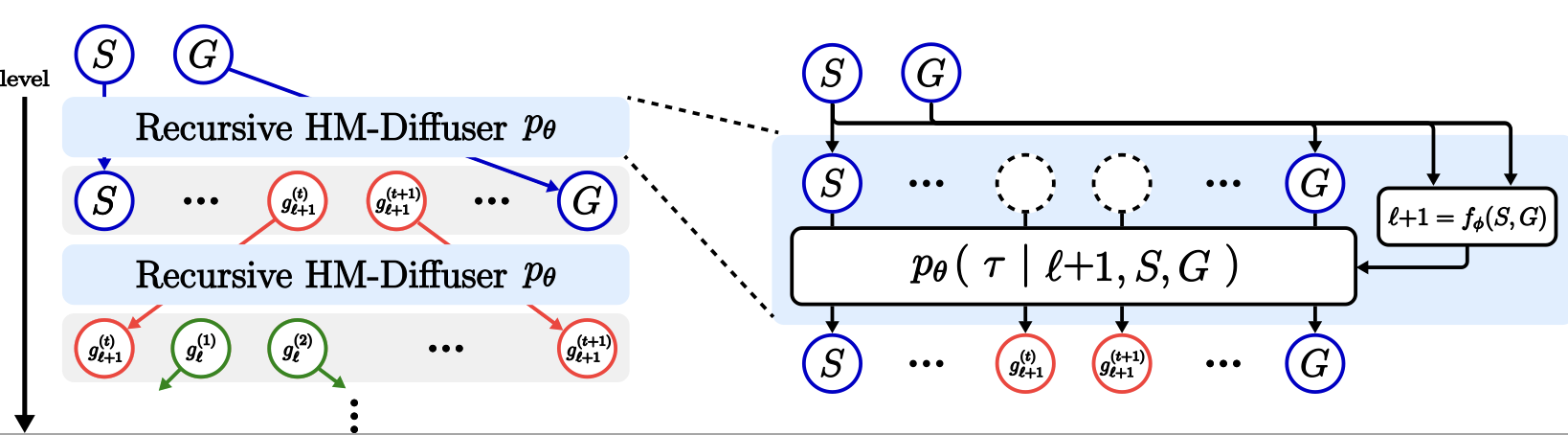

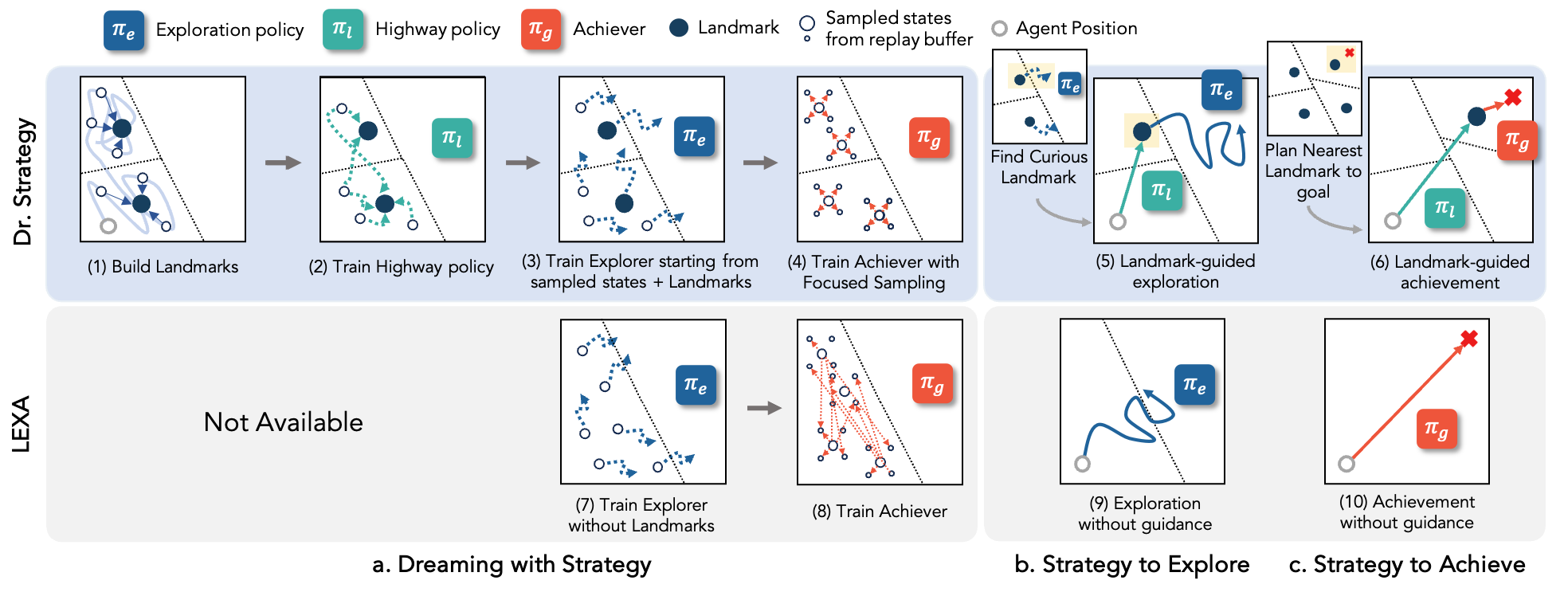

Dr. Strategy: Model-Based Generalist Agents with Strategic Dreaming

Hany Hamed*, Subin Kim*, Dongyeong Kim, Jaesik Yoon, Sungjin Ahn.

ICML

Research Experience

Artificial and Mechanical Intelligence Lab, IIT Genoa, Italy

– Present

- Developing torque-control humanoid locomotion policies using reinforcement learning.

- Designing and implementing RL experiments for ErgoCub with IsaacLab.

- Building a Sim-to-Real transfer framework to enable robust humanoid locomotion.

University of Alberta & Amii (Remote)

– Present

- Assisted in research on mask-based goal representation for robot learning, conducting experiments with multiple open-vocabulary object detection models to assess their effectiveness.

- Investigated model-based (TD-MPC2) and model-free (SAC) reinforcement learning for Sim-to-Sim transfer with finetuning in the target domain.

Machine Learning & Mind Lab, KAIST, Daejeon, South Korea

- Researched zero-shot task generalization in model-based RL, leading to a state-of-the-art agent.

- Explored intrinsic motivation in hierarchical model-based RL, focusing on how high-level policies guide low-level policies.

- Worked on diffusion-based planning for long-horizon tasks while learning on short trajectories.

Unmanned Technology Laboratory, Innopolis University, Russia

- Developed ROS packages for drone modules including gimbal control, RTK GPS, and payload control.

- Integrated a Livox lidar with the lab’s drone for outdoor mapping.

- Developed a handheld lidar device for indoor/outdoor mapping for an industrial partner.

Center of Robotics, Innopolis University, Russia

- Conducted RL experiments for a stabilizing control policy of a tensegrity hopper.

- Designed and implemented a contactless differentiable physics simulator for tensegrity robots using Taichi.

- Performed research on sim2real transfer for a three-prism tensegrity robot.

Education

School of Computing, KAIST

Computer Science, Innopolis University

Service

Reviewer

- Conferences: ICLR (2024, 2025), IROS 2024, ACML 2024, Humanoids 2025, AAAI 2026

- Workshops: ICML AutoRL 2024

- Journals: IEEE RA-L 2024

Teaching Assistant

- CS492: Deep Reinforcement Learning

- Introduction to ROS

Awards

- 2024 – ICML Travel Grant

- 2022–2024 – KAIST Graduate School Full Scholarship (Master)

- Fall 2020 – Outstanding Achievements, Innopolis University

- 2020, 2021 – Outstanding Contribution to Science, Innopolis University

- June 2021 – 3rd Place, DOTS Competition (Bristol Robotics Lab & Toshiba)

- 2018–2022 – Innopolis University Full Scholarship (Bachelor)